Tech Report | Approach | Results | TensorFlow | Other Implementations | Bibtex

Update (Apr, 2020): We have released a reloaded version with full training scripts in PyTorch and pre-trained models.

Update (Oct, 2018): We have re-implemented WDSR on TensorFlow for end-to-end training and testing. Pre-trained models are released. The runtime speed of weight normalization on tensorflow is also optimized.

- Requirements:

- Install PyTorch (tested on release 0.4.0 and 0.4.1).

- Clone EDSR-Pytorch as backbone training framework.

- Training and Validation:

- Copy wdsr_a.py, wdsr_b.py into

EDSR-PyTorch/src/model/. - Modify

EDSR-PyTorch/src/option.pyandEDSR-PyTorch/src/demo.shto support--n_feats, --block_feats, --[r,g,b]_meanoption (please find reference in issue #7, #8). - Launch training with EDSR-Pytorch as backbone training framework.

- Copy wdsr_a.py, wdsr_b.py into

- Still have questions?

- If you still have questions, please first search over closed issues. If the problem is not solved, please open a new issue.

| Network | Parameters | DIV2K (val) PSNR |

|---|---|---|

| EDSR Baseline | 1,372,318 | 34.61 |

| WDSR Baseline | 1,190,100 | 34.77 |

We measured PSNR using DIV2K 0801 ~ 0900 (trained on 0000 ~ 0800) on RGB channels without self-ensemble. Both baseline models have 16 residual blocks.

More results:

| Number of Residual Blocks | 1 | 3 | ||||

|---|---|---|---|---|---|---|

| SR Network | EDSR | WDSR-A | WDSR-B | EDSR | WDSR-A | WDSR-B |

| Parameters | 0.26M | 0.08M | 0.08M | 0.41M | 0.23M | 0.23M |

| DIV2K (val) PSNR | 33.210 | 33.323 | 33.434 | 34.043 | 34.163 | 34.205 |

| Number of Residual Blocks | 5 | 8 | ||||

|---|---|---|---|---|---|---|

| SR Network | EDSR | WDSR-A | WDSR-B | EDSR | WDSR-A | WDSR-B |

| Parameters | 0.56M | 0.37M | 0.37M | 0.78M | 0.60M | 0.60M |

| DIV2K (val) PSNR | 34.284 | 34.388 | 34.409 | 34.457 | 34.541 | 34.536 |

Comparisons of EDSR and our proposed WDSR-A, WDSR-B using identical settings to EDSR baseline model for image bicubic x2 super-resolution on DIV2K dataset.

Left: vanilla residual block in EDSR. Middle: wide activation. Right: wider activation with linear low-rank convolution. The proposed wide activation WDSR-A, WDSR-B have similar merits with MobileNet V2 but different architectures and much better PSNR.

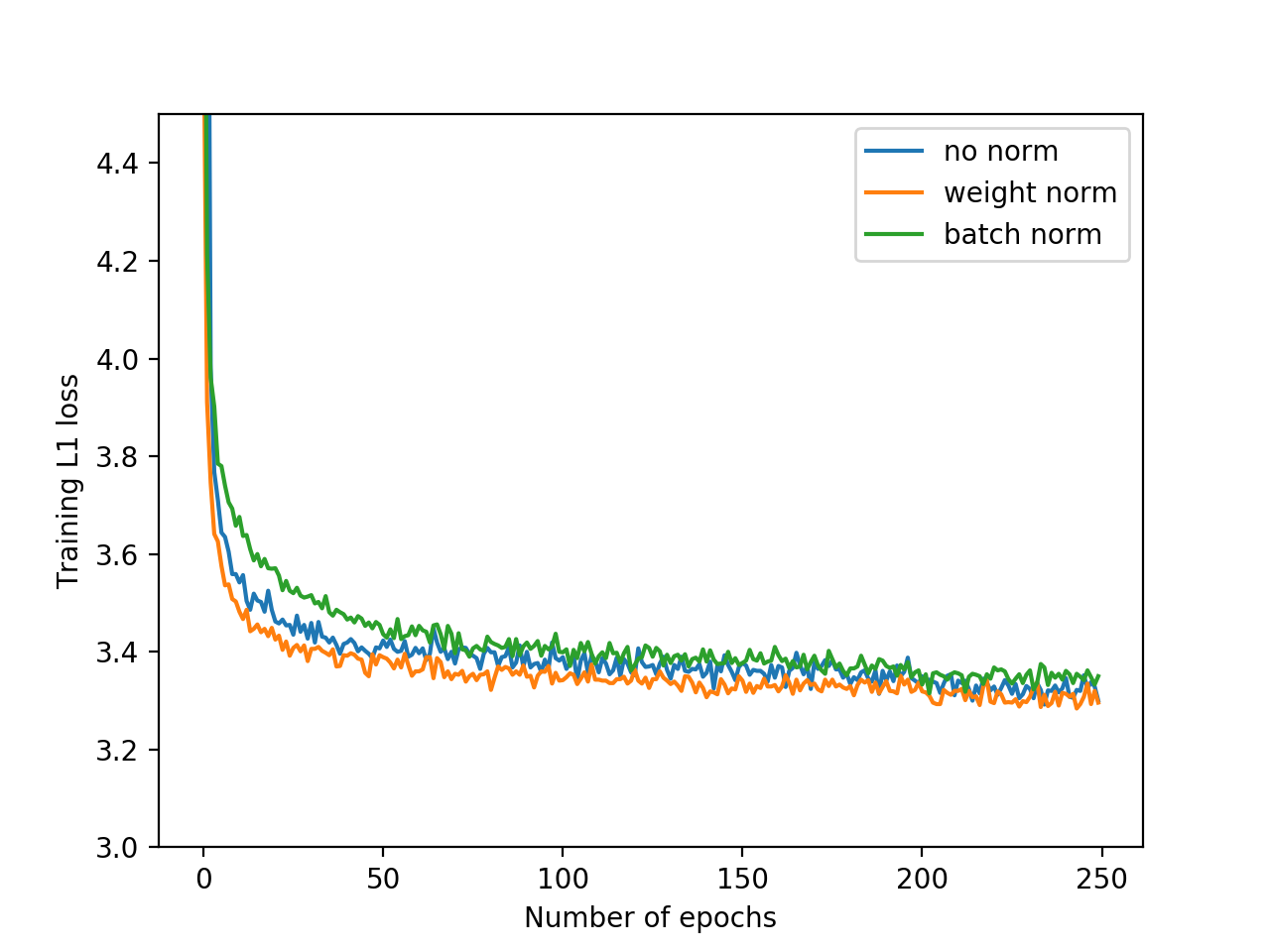

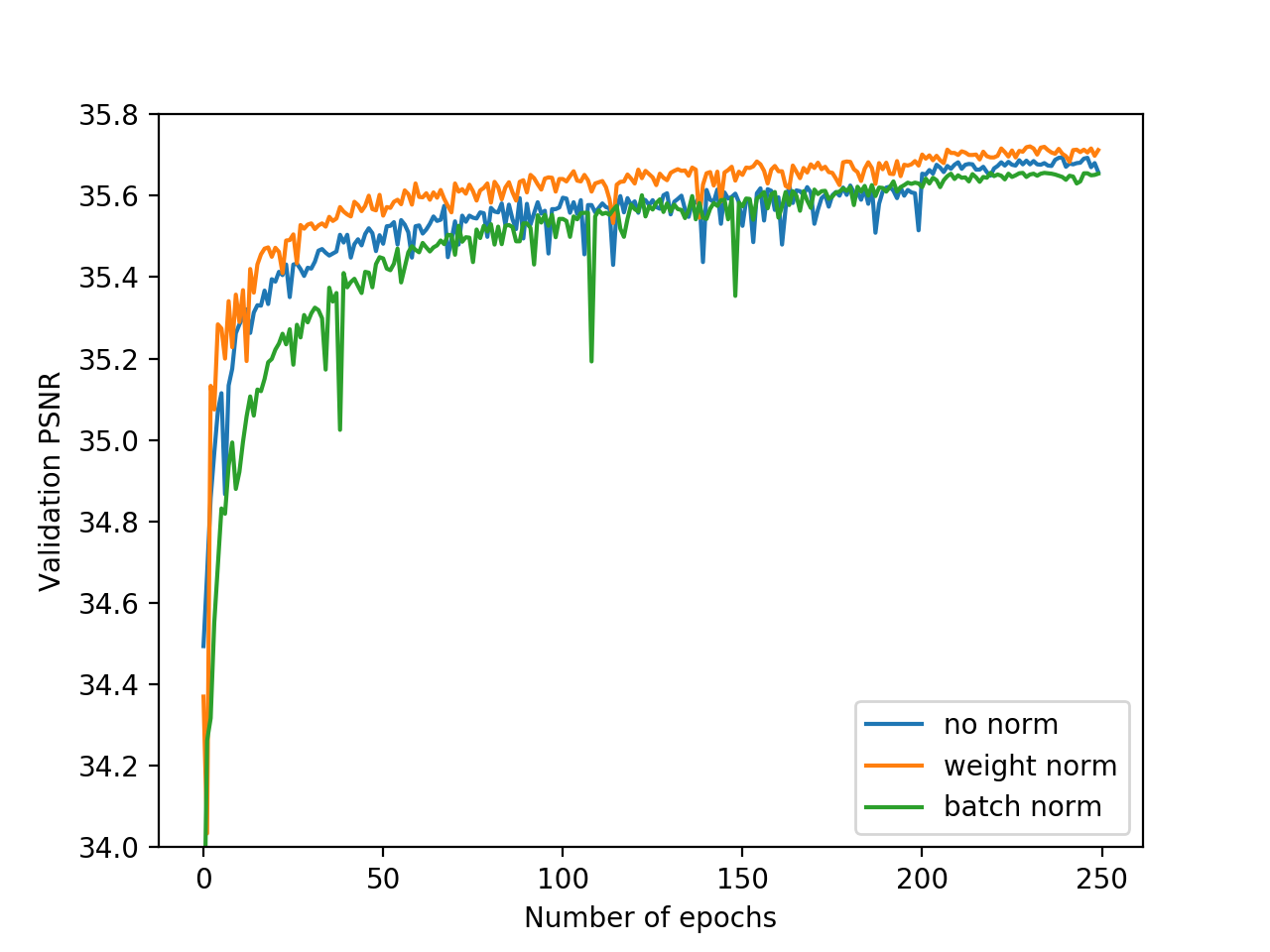

Training loss and validation PSNR with weight normalization, batch normalization or no normalization. Training with weight normalization has faster convergence and better accuracy.

- TensorFlow-WDSR (official)

- Keras-WDSR By Martin Krasser

Please consider cite WDSR for image super-resolution and compression if you find it helpful.

@article{yu2018wide,

title={Wide Activation for Efficient and Accurate Image Super-Resolution},

author={Yu, Jiahui and Fan, Yuchen and Yang, Jianchao and Xu, Ning and Wang, Xinchao and Huang, Thomas S},

journal={arXiv preprint arXiv:1808.08718},

year={2018}

}

@inproceedings{fan2018wide,

title={Wide-activated Deep Residual Networks based Restoration for BPG-compressed Images},

author={Fan, Yuchen and Yu, Jiahui and Huang, Thomas S},

booktitle={Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops},

pages={2621--2624},

year={2018}

}