Hi there! This guide is for you:

- You're new to Machine Learning.

- You know Python. (At least the basics! If you want to learn Python, try Dive Into Python.)

I learned Python by hacking first, and getting serious later. I wanted to do this with Machine Learning. If this is your style, join me in getting a bit ahead of yourself.

Note: There are several fields within "Data" and Machine Learning is just one. It's good to know the context: What is the difference between Data Analytics, Data Analysis, Data Mining, Data Science, Machine Learning, and Big Data?

I suggest you get your feet wet ASAP. You'll boost your confidence.

- Python. Python 3 is the best option.

- IPython and the Jupyter Notebook. (FKA IPython and IPython Notebook.)

- Some scientific computing packages:

- numpy

- pandas

- scikit-learn

- matplotlib

You can install Python 3 and all of these packages in a few clicks with the Anaconda Python distribution. Anaconda is popular in Data Science and Machine Learning communities.

If you're using Python 2.7, don't worry. You don't have to migrate to Python 3 just for this guide. Also, if you're using pip/virtualenv instead of Anaconda, that's alright too! Confer conda vs. pip vs. virtualenv if you're curious.

Learn how to use IPython Notebook (5-10 minutes). (You can learn by screencast instead.)

Now, follow along with this brief exercise (10 minutes): An introduction to machine learning with scikit-learn. Do it in ipython or IPython Notebook. It'll really boost your confidence.

You just classified some hand-written digits using scikit-learn. Neat huh?

scikit-learn is the go-to library for machine learning in Python. Some recognizable logos use it, including Spotify and Evernote. Machine learning is hard. You'll be glad your tools are easy to work with.

I encourage you to look at the scikit-learn homepage and spend about 5 minutes looking over the names of the strategies (Classification, Regression, etc.), and their applications. Don't click through yet! Just get a glimpse of the vocabulary.

Let's learn a bit more about Machine Learning, and a couple of common ideas and concerns. Read "A Visual Introduction to Machine Learning, Part 1" by Stephanie Yee and Tony Chu.

It won't take long. It's a beautiful introduction ... Try not to drool too much!

OK. Let's dive deeper.

Read "A Few Useful Things to Know about Machine Learning" by Pedro Domingos. It's densely packed with valuable information, but not opaque. The author understands that there's a lot of "black art" and folk wisdom, and they invite you in.

Take your time with this one. Take notes. Don't worry if you don't understand it all yet.

The whole paper is packed with value, but I want to call out two points:

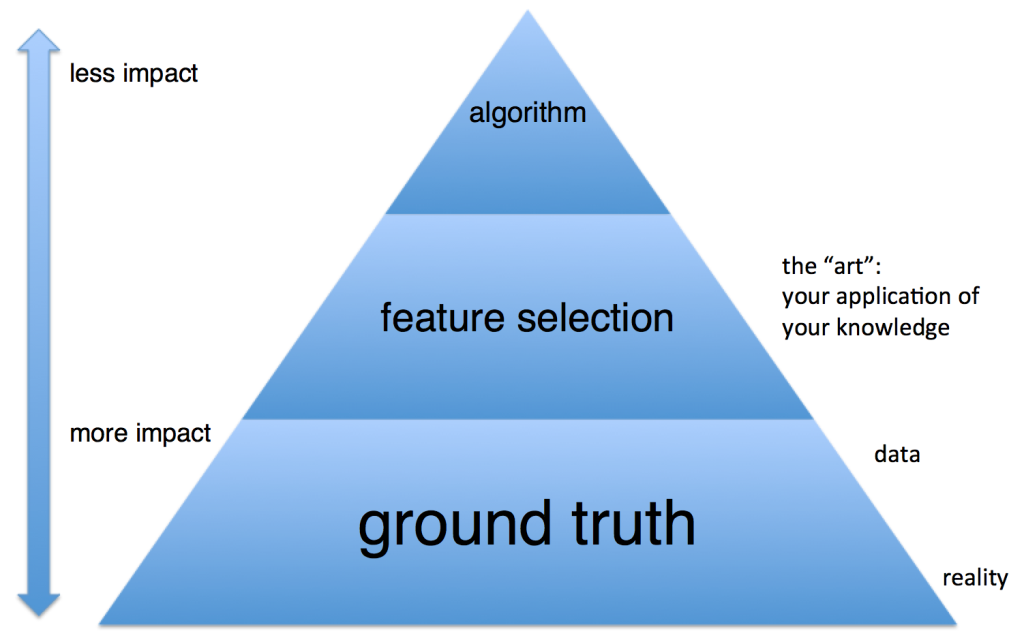

- Data alone is not enough. This is where science meets art in machine-learning. Quoting Domingos: "... the need for knowledge in learning should not be surprising. Machine learning is not magic; it can’t get something from nothing. What it does is get more from less. Programming, like all engineering, is a lot of work: we have to build everything from scratch. Learning is more like farming, which lets nature do most of the work. Farmers combine seeds with nutrients to grow crops. Learners combine knowledge with data to grow programs."

- More data beats a cleverer algorithm. Listen up, programmers. We like cool tools. Resist the temptation to reinvent the wheel, or to over-engineer solutions. Your starting point is to Do the Simplest Thing that Could Possibly Work. Quoting Domingos: "Suppose you’ve constructed the best set of features you can, but the classifiers you’re getting are still not accurate enough. What can you do now? There are two main choices: design a better learning algorithm, or gather more data. [...] As a rule of thumb, a dumb algorithm with lots and lots of data beats a clever one with modest amounts of it. (After all, machine learning is all about letting data do the heavy lifting.)"

So knowledge and data are critical. Focus your efforts on those, before fussing about algorithms. In practice, this means that unless you have to increase complexity, you should continue to Do Simple Things; don't rush to neural networks just because they're cool. To improve your model, get more data and use your knowledge of the problem to manipulate the data. You should spend most of your time on these steps. Only optimize your choice of algorithms after you've got enough data, and you've processed it well.

(Chart inspired by a slide from Alex Pinto's talk, "Secure Because Math: A Deep-Dive on ML-Based Monitoring".)

Subscribe to Talking Machines, a podcast about machine learning. It's great. It's a low-effort, high-yield way to learn more.

I suggest this listening order:

- Start with the "Starting Simple" episode. It supports what we read from Domingos. Ryan Adams talks about starting simple, as discussed above. Adams also stresses the importance of feature engineering. Feature engineering is an exercise of the "knowledge" Domingos writes about.

- Then, start over from the first episode

Pick one or two of these IPython Notebooks and play along.

- Face Recognition on a subset of the Labeled Faces in the Wild

- Machine Learning from Disaster: Using Titanic data, "Demonstrates basic data munging, analysis, and visualization techniques. Shows examples of supervised machine learning techniques."

- Election Forecasting: A replication of the model Nate Silver used to make predictions about the 2012 US Presidential Election for the New York Times)

- An example Machine Learning notebook: "let's pretend we're working for a startup that just got funded to create a smartphone app that automatically identifies species of flowers from pictures taken on the smartphone. We've been tasked by our head of data science to create a demo machine learning model that takes four measurements from the flowers (sepal length, sepal width, petal length, and petal width) and identifies the species based on those measurements alone."

- ClickSecurity's "data hacking" series (thanks hummus!)

- Detect Algorithmically Generated Domains

- Detect SQL Injection

- Java Class File Analysis: is this Java code malicious or benign?

- If you want more of a data science bent, pick a notebook from this excellent list of Data Science ipython notebooks. "Continually updated Data Science Python Notebooks: Spark, Hadoop MapReduce, HDFS, AWS, Kaggle, scikit-learn, matplotlib, pandas, NumPy, SciPy, and various command lines."

- Or more generic tutorials/overviews ...

There are more places to find great IPython Notebooks:

- A Gallery of Interesting IPython notebooks (wiki page on GitHub): Statistics, Machine Learning and Data Science

- Fabian Pedregosa's larger, automatic gallery

Pick one of the courses below and do the whole thing.

Prof. Andrew Ng's Machine Learning is a free online course I've seen recommended often. And emphatically.

It's helpful if you decide on a pet project to play around with, as you go, so you have a way to apply your knowledge. You could use one of these Awesome Public Datasets. And remember, IPython Notebook is your friend.

Also, the book Elements of Statistical Learning comes up frequently, but is usually referred to as a "reference" not an introduction. It's free, so download or bookmark it!

Here are some other free online courses I've seen recommended. (Machine Learning, Data Science, and related topics.)

- Machine Learning by Prof. Pedro Domingos of the University of Washington. Domingos wrote the paper "A Few Useful Things to Know About Machine Learning" quoted earlier in this guide. (Thanks to paperwork on Hacker News.)

- Data science courses as IPython Notebooks:

- Practical Data Science

- Learn Data Science (an entire self-directed course!)

- Supplementary material: donnemartin/data-science-ipython-notebooks. "Continually updated Data Science Python Notebooks: Spark, Hadoop MapReduce, HDFS, AWS, Kaggle, scikit-learn, matplotlib, pandas, NumPy, SciPy, and various command lines."

- General Assembly's Data Science course. Interactive. (Older versions: 7, 5, 4, 3.)

- Surveys of Data Science courseware (a bit more Choose Your Own Adventure)

- Check out Jack Golding's survey of Data Science courseware. Includes Coursera's Data Science Specialization with 9 courses in it. The Specialization certificate isn't free, but you can take the courses 1-by-1 for free if you don't care about the certificate. The survey also covers Harvard CS109 which I've seen recommended elsewhere.

- Another epic Quora thread: How can I become a data scientist?

- Data Science Weekly's Big List of Data Science Resources has a List of Data Science MOOCs

- Data Science (Harvard CS109)

- Advanced Statistical Computing (Vanderbilt BIOS8366) -- great option, interactive (lots of IPython Notebook material)

If you're focusing on Python, you should get more familiar with Pandas.

- Essential: 10 Minutes to Pandas

- Essential: Things in Pandas I Wish I'd Had Known Earlier (IPython Notebook)

- Useful Pandas Snippets

- Here are some docs I found especially helpful as I continued learning:

Bookmark these cheat sheets:

- scikit-learn algorithm cheat sheet

- Metacademy: a package manager for [machine learning] knowledge. A mind map of machine learning concepts, with great detail on each.

- Matplotlib / Pandas / Python cheat sheets.

Not repeating the materials mentioned above, here are some more Data Science resources:

- Extremely accessible data science book: Data Smart by John Foreman

- An entire self-directed course in Data Science, as a IPython Notebook

- Data Science Workflow: Overview and Challenges (read the article & the comment by Joseph McCarthy)

- Fun little IPython Notebook: Web Scraping Indeed.com for Key Data Science Job Skills

Check out Gideon Wulfsohn's excellent introduction to Machine Learning for specialized knowledge on many topics... including Ensemble Methods, Apache Spark, Neural Networks, Reinforcement Learning, Natural Language Processing (RNN, LDA, Word2Vec), Structured Prediction, Deep Learning, Distributed Systems (Hadoop Ecosystem), Graphical Models (Hidden Markov Models), Hyper Parameter Optimization, GPU Acceleration (Theano), Computer Vision, Internet of Things, and Visualization.

Here's an IPython Notebook book about Probabilistic Programming and Bayesian Methods for Hackers: "An intro to Bayesian methods and probabilistic programming from a computation/understanding-first, mathematics-second point of view."

For now, the best StackExchange site is stats.stackexchange.com – machine-learning. (There's also datascience.stackexchange.com, but it's still in Beta.) And there's /r/machinelearning. There are also many relevant discussions on Quora, for example: What is the difference between Data Analytics, Data Analysis, Data Mining, Data Science, Machine Learning, and Big Data?

You should also join the Gitter channel for scikit-learn!

For help and community in meatspace, seek out meetups. Data Science Weekly's Big List of Data Science Resources may help you.

The rest of the stuff that might not be structured enough for a course, but seems important to know.

"Machine learning systems automatically learn programs from data." Pedro Domingos, in "A Few Useful Things to Know about Machine Learning." The programs you generate will require maintenance. Like any way of creating programs faster, you can rack up technical debt.

Really essential:

- Surviving Data Science "at the Speed of Hype" by John Foreman, Data Scientist at MailChimp

- 11 Clever Methods of Overfitting and How to Avoid Them

A worthwhile paper: Machine Learning: The High-Interest Credit Card of Technical Debt. Here's the abstract:

Machine learning offers a fantastically powerful toolkit for building complex systems quickly. This paper argues that it is dangerous to think of these quick wins as coming for free. Using the framework of technical debt, we note that it is remarkably easy to incur massive ongoing maintenance costs at the system level when applying machine learning. The goal of this paper is highlight several machine learning specific risk factors and design patterns to be avoided or refactored where possible. These include boundary erosion, entanglement, hidden feedback loops, undeclared consumers, data dependencies, changes in the external world, and a variety of system-level anti-patterns.

And a few more articles:

- The Perilous World of Machine Learning for Fun and Profit: Pipeline Jungles and Hidden Feedback Loops

- The High Cost of Maintaining Machine Learning Systems

So you are dabbling with Machine Learning. You've got Hacking Skills. Maybe you've got some "knowledge" in Domingos' sense (some "Substantive Expertise" or "Domain Knowledge"). This diagram isn't a perfect fit for us, but may get the point across:

Please don't sell yourself as a Machine Learning expert while you're still in the Danger Zone. Don't build bad products or publish junk science. This guide can't tell you how you'll know you've "made it" into Machine Learning competence ... let alone expertise. It's hard to evaluate proficiency without schools or other institutions. This is a common problem for self-taught people.

You need practice. On Hacker News, user olympus commented to say you could use competitions to practice and evaluate yourself. Kaggle and ChaLearn are hubs for Machine Learning competitions. You can find some examples of code for popular Kaggle competitions here. For smaller exercises, try HackerRank.

You also need understanding. You should review what Kaggle competition winners had to say about their solutions, for example, the "No Free Hunch" blog. These might be over your head at first but once you're starting to understand and appreciate these, you know you're getting somewhere.

Competitions and challenges are one way to practice. You shouldn't limit yourself, though.

Here's a complementary way to practice: do practice studies.

- Ask a question. Start your own study. The "most important thing in data science is the question" (Dr. Jeff T. Leek). So start with a question. Then, find real data. Analyze it. Then ...

- Communicate results. When you have a novel finding, reach out for peer review (see below).

- Fix issues. Learn. Share what you learn.

And repeat. Re-phrasing this, it fits with the scientific method: formulate a question (or problem statement), create a hypothesis, gather data, analyze the data, and communicate results. (You should watch this video about the scientific method in data science, and/or read this article.)

How can you come up with interesting questions? Once a week, browse datasets and write down some questions. Also, you should sign up for Data is Plural, a newsletter of interesting data sets. Stay curious. When a question inspires you, start a study.

This advice, to do practice studies and learn from peer review, is based on a conversation with Dr. Randal S. Olson. Here's more advice from Olson, quoted with permission:

I think the best advice is to tell people to always present their methods clearly and to avoid over-interpreting their results. Part of being an expert is knowing that there's rarely a clear answer, especially when you're working with real data.

As you repeat this process, your practice studies will become more scientific, interesting, and focused. The most important part of this process is peer review.

Here are some communities where you can reach out for peer review:

- Cross-Validated: stats.stackexchange.com

- /r/DataIsBeautiful

- /r/DataScience

- /r/MachineLearning

- Hacker News: news.ycombinator.com. You'll probably want to submit as "Show HN"

Post to any of those, and ask for feedback. You'll get feedback. You'll learn a ton. As experts review your work you will learn a lot about the field. You'll also be practicing the skill of accepting critical feedback.

When I read the feedback on my Pull Requests, first I repeat to myself, "I will not get defensive, I will not get defensive, I will not get defensive." You may want to do that before you read reviews of your Machine Learning work too.

If you create software for users, and you want to use machine learning to benefit your users, you must understand your users. I won't get into a whole user experience lesson here, but in short, you must think about user experience.

I have a friend who worked at <Redacted> Music Streaming Service. This company used machine learning in their recommendation and radio services. He complained about the way the company scored the radio feature's performance. There was disagreement about what should be scored. They used a metric, "no song skips." But why? Sure that indicates the recommendation wasn't awful, what if you want to measure engagement? Other metrics could measure positive engagement: "favorites," shares, listening time, or whether the listener returns to the radio station later. Measuring "no skips" might work for the passive listener, but the engaged listener is different. Perhaps the engaged listener will skip 5 songs, but find 20 songs they love and come back to the service later.

My takeaway: user experience matters just as much as ever. You must understand which kind of user you're trying to benefit with your machine learning techniques. (Write user stories!) You must come up with measurements and ways to evaluate success for those users. You must be able to measure before you can optimize.

This has to do with knowledge of the domain, but also may benefit from UX expertise. Work with UX experts if you can.

There was a great BlackHat webcast on this topic, Secure Because Math: Understanding Machine Learning-Based Security Products. Slides are there, video recording is here. Equally relevant to InfoSec and AppSec.

Scaling data analysis is a familiar problem now, and there's no shortage of ways to address it. Beware needless hype and companies that want to sell you flashy, proprietary solutions. You can do it all with open-source tools. Even if you contract it, you consider looking for contractors who use known good stacks. No news here.

Here are some tools to reach for:

- Apache Spark.

- NetflixOSS (see "Big Data Tools")

Also: 10 things statistics taught us about big data analysis

- Bookmark awesome-machine-learning, a curated list of awesome Machine Learning libraries and software.

- TensorFlow seems like a really big deal. People like you will do exciting things with TensorFlow. It's a framework. Frameworks can help you manage complexity. Just remember this rule of thumb: "More data beats a cleverer algorithm" (Domingos), no matter how cool your tools are. Also note, TensorFlow is not the only machine learning framework of its kind: Check this great, detailed comparison of TensorFlow, Torch, and Theano. See also Newmu/Theano-Tutorials and nlintz/TensorFlow-Tutorials.

- Bookmark Pythonidae, a curated list of awesome libraries and software in the Python language.

- Bookmark Julia.jl, a curated list of awesome libraries and software in the Julia language.

- For Machine-Learning libraries that might not be on PyPI, GitHub, etc., there's MLOSS (Machine Learning Open Source Software). Seems to feature many academic libraries.

Here are some other guides to Machine Learning. They can be alternatives or complements to this guide.

- Machine Learning for Developers is another good introduction. It introduces machine learning for a developer audience using Smile, a machine learning library that can be used both in Java and Scala.

- Materials for Learning Machine Learning by Jack Simpson

- How to Machine Learn by Gideon Wulfsohn

- Example Machine Learning notebook, exercise, and guide by Dr. Randal S. Olson. Mentioned in Notebooks section as well, but it has a similar goal to this guide (introduce you, and show you where to go next). Rich "Further Reading" section.

- [Your guide here]